How I Structure a Claude Code Session to Actually Ship

A practical breakdown of how to open, run, and close a Claude Code session so the agent stays on track and the output lands in production.

Most of my early Claude Code sessions ended the same way: something worked, but I could not explain what the agent had actually changed, and I was nervous to commit. The problem was not the model. It was that I had no structure around the session itself.

Over the past few months I have settled on a repeatable shape for agentic coding sessions that consistently produces output I can review, understand, and ship.

Before You Open the Session

The most important work happens before you type anything. If you open Claude Code and immediately say "fix the auth bug," you are asking the agent to define the problem for you. It will usually settle on a plausible reading of the problem rather than the one you had in mind.

Instead, spend two minutes writing down:

- What exactly is broken or missing, in one sentence

- Which files are most likely involved

- What "done" looks like, i.e. the acceptance criterion

This does not need to be formal. A comment in a scratch file is fine. The point is that you have made a decision before the agent starts, not after.
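If you prefer something slightly more structured than a scratch comment, the three decisions above can be captured in a small helper. This is a minimal sketch, not a Claude Code feature: `SessionBrief` and its field names are hypothetical, and the example values are taken from the auth example used later in this post.

```python
from dataclasses import dataclass, field


@dataclass
class SessionBrief:
    """Hypothetical container for the three pre-session decisions."""

    problem: str  # what is broken or missing, in one sentence
    likely_files: list[str] = field(default_factory=list)
    done_when: str = ""  # the acceptance criterion

    def render(self) -> str:
        """Format the brief as plain text for a scratch file or an opening message."""
        files = "\n".join(f"  - {path}" for path in self.likely_files) or "  - (unknown)"
        return (
            f"Problem: {self.problem}\n"
            f"Likely files:\n{files}\n"
            f"Done when: {self.done_when}"
        )


# Illustrative values only.
brief = SessionBrief(
    problem="API key validation is skipped when the Authorization header is present.",
    likely_files=["middleware/auth.go"],
    done_when="API key validation runs with the header present; JWT path untouched.",
)
print(brief.render())
```

The value is not in the code itself but in being forced to fill in all three fields before the session starts.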

Opening the Session

Start with context, not instructions. Claude Code reads your CLAUDE.md automatically, but it does not know what you were doing yesterday. A short framing message helps:

    I am working on the admin auth flow in middleware/auth.go.
    The issue: API key validation is being skipped when the Authorization
    header is present. I want to fix this without touching the JWT path.

Then let the agent read the relevant files before you ask it to change anything. If you jump straight to "edit this file," the agent may make plausible-looking changes that miss the actual constraint.

During the Session

Keep the task scope tight. One logical change per session is a good rule of thumb. If the agent surfaces a related problem (a missing index, an unhandled error, a naming inconsistency), note it somewhere and stay on the original task. Scope creep in agentic sessions is hard to review and easy to regret.

Watch the tool calls, not just the output. When Claude Code reads a file, checks a definition, or runs a command, those actions tell you whether the agent has understood the problem. An agent that edits a file without reading it first is guessing.
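The "edited without reading" signal can be expressed as a simple ordering check. The sketch below assumes you have the session's tool calls as an ordered list of `(action, path)` pairs. That shape is hypothetical and is not a real Claude Code export format, and `middleware/jwt.go` is an invented file name used only for illustration.

```python
def edits_without_read(tool_calls):
    """Return paths that were edited before being read in the same session.

    `tool_calls` is a hypothetical ordered list of (action, path) pairs,
    where action is "read" or "edit". Other actions are ignored.
    """
    seen = set()   # paths the agent has read so far
    flagged = []   # paths edited with no earlier read, in first-seen order
    for action, path in tool_calls:
        if action == "read":
            seen.add(path)
        elif action == "edit" and path not in seen and path not in flagged:
            flagged.append(path)
    return flagged


calls = [
    ("read", "middleware/auth.go"),
    ("edit", "middleware/auth.go"),
    ("edit", "middleware/jwt.go"),  # edited blind: never read in this session
]
print(edits_without_read(calls))  # ['middleware/jwt.go']
```

In practice you do this check by eye while the session runs. The code only pins down what "guessing" means here.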

If the agent gets stuck or starts repeating itself, stop and reframe. Do not add more context on top of a confused session. Clear the conversation (for example with Claude Code's /clear command) and re-approach with a narrower prompt.

Reviewing the Output

Before you accept any changes, run git diff and read every line. This sounds obvious, but it is easy to skim when the agent's summary sounds confident. Agents can produce correct-looking output that solves the wrong problem.

Things to check:

- Did the change stay within the scope you defined?

- Are there any new dependencies or imports that should not be there?

- Does the change break any obvious invariants in adjacent code?

- Are there debug logs, commented-out blocks, or temporary hacks left behind?

If something looks off, ask the agent to explain the specific line rather than re-running the whole task. Explanation is cheap; a second round of edits is not.
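Some of the checklist can be mechanized as a first pass before the line-by-line read. The following is a rough sketch under stated assumptions: `review_diff`, `DEBUG_PATTERNS`, and the sample diff are all illustrative, the patterns are deliberately crude, and the import check only catches single-line import statements (it will miss entries inside a grouped Go `import (...)` block). It does not replace reading the diff.

```python
import re

# Illustrative patterns only; tune them to your own codebase.
DEBUG_PATTERNS = [r"\bfmt\.Println\(", r"\bconsole\.log\(", r"\bTODO\b", r"\bFIXME\b"]
IMPORT_PATTERN = r"\s*(import\b|from\s+\S+\s+import\b)"


def review_diff(diff_text, allowed_files):
    """Scan unified diff text and return a list of human-readable warnings."""
    warnings = []
    current = None  # file that the hunks being scanned belong to
    for line in diff_text.splitlines():
        if line.startswith("+++ "):
            # "+++ b/path" names the post-change file for the following hunks.
            current = line[4:].removeprefix("b/")
            if current != "/dev/null" and current not in allowed_files:
                warnings.append(f"out of scope: {current}")
        elif line.startswith("+") and not line.startswith("+++"):
            added = line[1:]
            if any(re.search(p, added) for p in DEBUG_PATTERNS):
                warnings.append(f"possible leftover in {current}: {added.strip()}")
            if re.match(IMPORT_PATTERN, added):
                warnings.append(f"new import in {current}: {added.strip()}")
    return warnings


# Hypothetical diff: one in-scope file with a debug print, one out-of-scope file.
sample = """\
diff --git a/middleware/auth.go b/middleware/auth.go
--- a/middleware/auth.go
+++ b/middleware/auth.go
@@ -10,3 +10,4 @@
 func AdminAuth() {
+    fmt.Println("debug: key", key)
 }
diff --git a/db/schema.sql b/db/schema.sql
--- a/db/schema.sql
+++ b/db/schema.sql
@@ -1,1 +1,2 @@
+CREATE INDEX idx ON t (c);
"""

for warning in review_diff(sample, allowed_files={"middleware/auth.go"}):
    print(warning)
```

To run it against a real working tree, the diff text could come from `subprocess.run(["git", "diff"], capture_output=True, text=True).stdout`. An empty result means only that the crude checks found nothing, not that the change is correct.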

Closing the Session

Commit with a message that captures intent, not just what changed. "Fix API key validation order in AdminAuth middleware" is more useful than "update auth.go." The commit message is where you record what the session was actually about.

If the session produced drafts or partial work that you are not ready to commit, stash or branch it. Do not leave an agent session open indefinitely hoping to pick it up later. Context degrades, and you will spend more time re-orienting the agent than if you had closed cleanly and started fresh.

The Pattern That Works

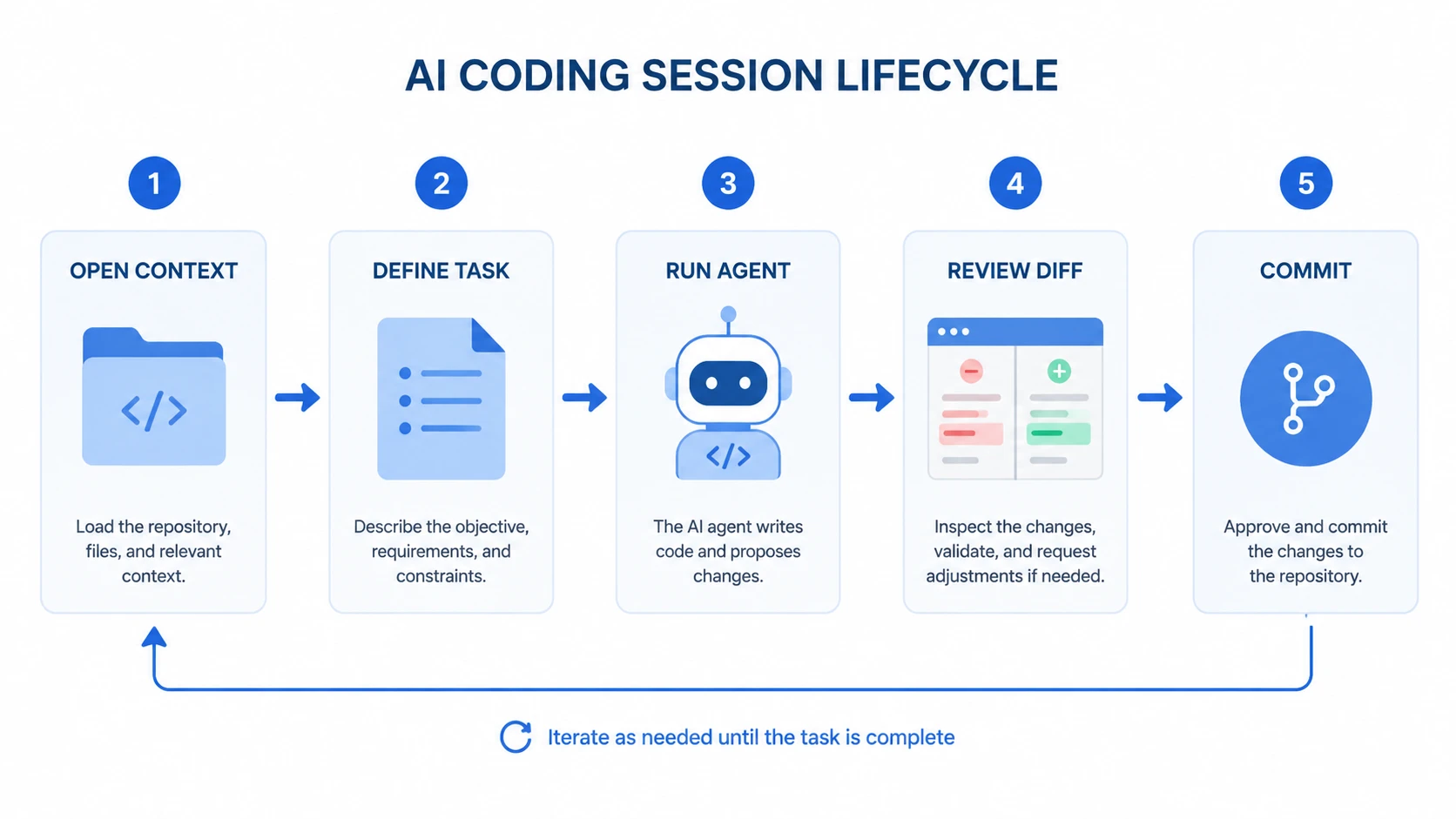

The sessions that ship reliably share a structure: clear pre-session definition, focused scope, active monitoring during execution, careful diff review, and a clean close. None of this is unique to Claude Code. It is the same discipline that makes any code review or pair programming session productive.

What changes with an AI agent is the speed. The agent can produce a lot of output quickly, which means mistakes compound faster too. Structure is what keeps that speed from becoming a liability.